In the rapidly maturing landscape of AI system design, the debate over whether the ReAct pattern has become obsolete misses the fundamental architectural reality of 2026: ReAct is not dead, but it has transitioned from a standalone architecture to the atomic unit of execution within sophisticated Multi-Agent Collaboration ecosystems. While the single-loop "Reason and Act" methodology remains essential for individual tasks, relying on a solitary generalist agent to manage complex, multi-step workflows has proven to be an unscalable anti-pattern, plagued by context pollution and inevitable cognitive drift. The industry has therefore pivoted toward multi-agent orchestration, a paradigm that mirrors the reliability of microservices by decomposing monolithic responsibilities into specialized, interacting personas. For senior engineers and system architects, understanding this shift is critical, as the focus moves from simple prompt engineering to designing resilient agentic workflow structures where distinct planners, executors, and reviewers operate under a shared protocol. By leveraging hierarchical agent architecture and robust inter-agent communication, developers can eliminate the self-correction bias inherent in single-agent loops, ensuring that execution is strictly separated from verification. Mastering these coordination mechanisms and agent consensus protocols is the defining requirement for the next generation of production AI, transforming fragile prototypes into fault-tolerant systems capable of handling the nuance and scale that modern enterprise applications demand.

Is ReAct Obsolete? The Shift to Multi-Agent Systems

If you are asked in a 2026 system design interview whether the ReAct (Reason + Act) pattern is obsolete, the nuance is critical: ReAct is not obsolete; it has become atomic.

While the single-loop ReAct pattern remains the fundamental execution unit for individual agents, relying on a single generalist agent to handle complex, multi-step workflows has hit a performance ceiling. The industry has shifted toward Multi-Agent Collaboration to solve problems where context limits and cognitive overload degrade the performance of a solitary LLM.

Defining Multi-Agent Collaboration

For the purpose of technical interviews, you should define this architecture not just as "many agents," but as a structured system comprising three non-negotiable pillars.

Definition: Multi-Agent Collaboration

An architectural pattern where multiple independent agents work towards a shared objective, characterized by:

1. Specialized Agents: Distinct personas (e.g., Planner, Coder, Reviewer) with narrowed scopes and tools, rather than one generalist trying to do everything.

2. Shared Protocol: A defined schema for message passing and state management, ensuring agents can communicate intent and results effectively.

3. Coordination Mechanism: A control flow (such as a hierarchical supervisor or a flat debate loop) that orchestrates the sequence of operations and resolves conflicts.

Single-Agent vs. Multi-Agent: The Architectural Shift

The move from Single-Agent ReAct to Multi-Agent systems mirrors the evolution from monolithic software to microservices. In a single-agent setup, the model acts as a "God Object," holding the entire state and logic in one fragile context window. Multi-agent systems decouple these responsibilities.

| Feature | Single-Agent ReAct | Multi-Agent Collaboration |
|---|---|---|
| Role | Generalist: Handles planning, execution, and validation simultaneously. | Specialist: "Division of Labor" where agents focus on narrow, bounded execution. |
| Context | Global & Bloated: The entire history is stuffed into one window, leading to "context pollution." | Scoped & Ephemeral: Agents only see the context relevant to their specific sub-task. |
| Reliability | Fragile: If the agent hallucinates a step, the entire loop often fails without recovery. | Resilient: A separate "Reviewer" agent can catch errors and trigger a retry loop. |
| Design Principle | Looping: A single think → act → observe cycle carries both strategy and execution. | Orchestration/Choreography: Separating strategy from execution to reduce cognitive load. |

In high-level system design, this shift allows engineers to treat individual ReAct loops as reliable components within a larger, fault-tolerant graph. The following sections will explore why this separation is necessary for scaling and detail the specific design patterns used to implement it.

Why Single-Agent ReAct Fails at Scale

While the ReAct (Reason + Act) loop was the breakthrough that popularized agentic AI, relying on a single agent for complex, multi-step workflows has become an architectural anti-pattern in 2026. For production-grade systems, the "Generalist Agent" model hits a hard ceiling driven by three specific failure modes: Context Pollution, Role Confusion, and Hallucination Loops.

1. Context Pollution (The "Infinite Scroll" Problem)

In a single-agent ReAct loop, every thought, tool output, and observation is appended to a single, growing context window. As the history lengthens, the signal-to-noise ratio drops. The agent begins to struggle with "attention drift," where irrelevant details from Step 1 interfere with the decision-making logic needed for Step 10.

- The Technical Limit: Even with massive context windows (1M+ tokens), the reasoning quality degrades as the prompt becomes cluttered. The agent forgets the original constraints or gets distracted by previous tool errors.

- The Symptom: The agent successfully completes complex sub-tasks but fails to format the final JSON output correctly because the formatting instruction is buried 50 messages back.

2. Role Confusion (The "Jack-of-All-Trades" Fallacy)

A single agent cannot effectively switch between conflicting personas (such as "Creative Writer" and "Strict Auditor") within the same context. When you ask one LLM instance to generate code and then immediately verify it, it suffers from self-correction bias. It tends to overlook its own errors because the same underlying state that generated the bug is being used to find it.

As noted in architectural discussions on hierarchical agent systems, a common failure mode is the lack of separation between execution and verification. Without distinct boundaries, the agent becomes a "silent co-author" of its own mistakes, rationalizing bad logic rather than catching it.

3. Hallucination Loops (The Echo Chamber)

When a single agent encounters a runtime error or an unexpected tool output, it often enters a "Hallucination Loop." Without external feedback or a fresh perspective, the agent tries to "brute force" a solution, often repeating the same failed action or inventing non-existent library functions to bypass the error.

Deep Agent Insight: Classic agents often run a simple loop (think → act → observe) that works for transactional queries but breaks down on multi-hour tasks due to looping without recovery mechanisms.

Concrete Example: The "Code Fix" Scenario

To visualize why the industry is shifting, consider a scenario where an agent must write a Python script that interacts with a deprecated API.

| Feature | Single-Agent ReAct Pattern | Multi-Agent Collaboration Pattern |
|---|---|---|
| Workflow | The agent writes code, gets a 404 error, and assumes the URL is wrong. It retries 5 times with random URL variations, eventually hallucinating a "fix" that doesn't work. | Agent A (Coder) writes the code. Agent B (Researcher) sees the 404, checks the docs, and identifies that the API is deprecated. Agent B tells Agent A to switch libraries. |
| Failure Mode | Context Pollution: The accumulated error logs deepen the confusion.<br>Hallucination Loop: Stuck in a retry cycle. | Specialization: The Researcher provides "Ground Truth" that breaks the Coder's loop. |
| Outcome | High latency, high token cost, failure. | Higher accuracy, predictable recovery. |

In summary, single-agent systems lack the structural friction necessary for quality control. They optimize for continuity, whereas robust engineering requires the distinct separation of concerns—writing vs. reviewing, planning vs. executing—that only multi-agent architectures provide.

The 3 Core Multi-Agent Design Patterns

By 2026, vague descriptions of "agents talking to each other" are no longer sufficient for technical interviews. The industry has converged on a standard architectural vocabulary to describe how agents coordinate. The distinction between these patterns lies primarily in their control flow and dependency structure: does the system move linearly, does it branch dynamically under supervision, or does it iterate through peer consensus?

When designing a Multi-Agent System (MAS), you will typically choose from three foundational topologies.

1. Sequential Handoffs (The Pipeline)

This is the most deterministic pattern, often described as a "Chain of Agents." It is ideal for workflows where the output of one agent serves strictly as the input for the next, with no need for feedback loops or dynamic replanning.

- Structure: `Agent A (Output) -> Agent B (Input) -> Agent C (Result)`

- Control Flow: Hardcoded state transitions.

- Use Case: Automated content pipelines (e.g., Researcher → Writer → Translator).

- Trade-off: High reliability but low flexibility. If the Researcher hallucinates, the Writer amplifies the error downstream.

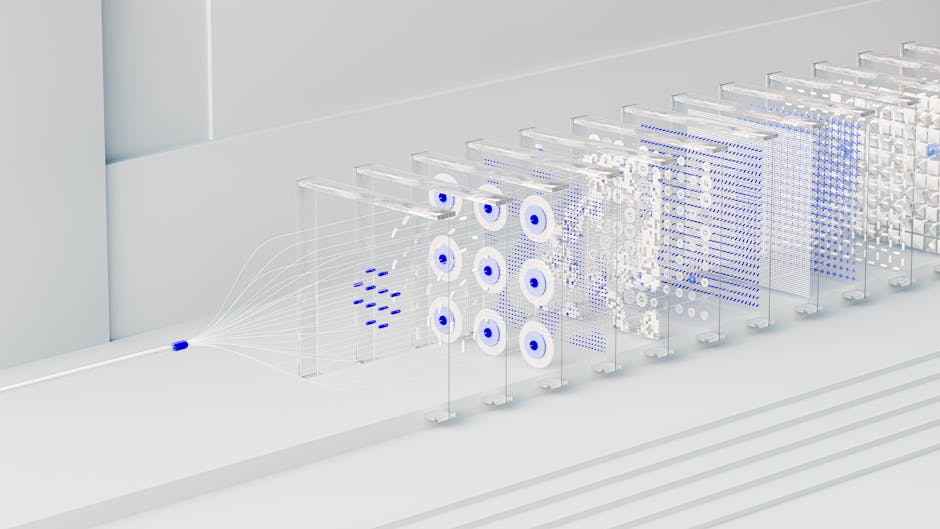

2. Hierarchical Collaboration (The Supervisor)

The Hierarchical pattern, often called a "Vertical Architecture," introduces a central orchestrator or "Supervisor" agent. This pattern decouples planning from execution.

- Structure: A root `Supervisor` agent breaks down a complex user goal into sub-tasks and delegates them to specialized `Worker` agents (e.g., a Coder, a Reviewer, a Tester).

- Control Flow: The Supervisor manages the state and decides which worker to call next based on the previous worker's output. As noted in industry analysis, decisions cascade down the hierarchy while information bubbles up, mirroring human organizational structures.

- Key Advantage: It solves the "context pollution" problem. Workers only see the specific instructions for their sub-task, while the Supervisor maintains the global context.

- Interview Tip: Mention that this is the preferred architecture for enterprise applications requiring strict guardrails, as the Supervisor can enforce policy checks before and after every worker action.

3. Joint Collaboration (The Network/Flat)

In a Joint or "Horizontal" architecture, agents interact as peers without a central supervisor. This pattern relies on dynamic consensus rather than directed command.

- Structure: Multiple agents (often with different personas, such as a "Proponent" and an "Opponent") share a conversation history and critique each other's work.

- Control Flow: Emergent. The conversation ends when a consensus condition is met or a max-turn limit is reached.

- Use Case: Complex reasoning tasks, creative brainstorming, or reducing hallucinations through adversarial debate. Research suggests that horizontal architectures are best for open-ended or creative tasks where no single "correct" path exists.

- Trade-off: Higher latency and token costs due to the iterative nature of the dialogue.

Summary: Selecting the Right Pattern

In an interview, you should justify your choice of pattern based on the task dependency:

| Feature | Sequential | Hierarchical | Joint (Flat) |
|---|---|---|---|
| Dependency | Linear (A → B) | Dynamic (A → ?) | Cyclic / Iterative |
| Orchestration | Hardcoded logic | LLM Supervisor | Protocol / Voting |
| Best For | ETL, Transformations | Complex Problem Solving | Creative / QA |

Pattern 1: Hierarchical Orchestration (The Supervisor)

The Hierarchical Orchestration pattern, often referred to as the "Boss-Worker" topology, organizes agents into a structured tree rather than a flat mesh. In this architecture, a central Supervisor (or Orchestrator) functions as the decision-making node, while Worker agents execute specific, bounded tasks.

How It Works

The Supervisor acts as a state machine. It does not perform the actual work (e.g., writing code or searching the web); instead, it breaks down the user's high-level goal into sub-tasks and delegates them to specialized workers.

- State Management: The Supervisor maintains the global context and conversation history.

- Routing: Based on the current state, the Supervisor selects the next appropriate worker.

- Aggregation: The Supervisor receives the output from a worker, updates the state, and decides whether to route to another worker, retry, or return the final answer to the user.

This separation of concerns is critical for scaling. As noted in discussions on hierarchical mental models, strategy is separated from execution. The Supervisor focuses on planning and evaluation, while workers focus on execution. This reduces the cognitive load on individual workers, as they only need to process the specific context relevant to their sub-task rather than the entire conversation history.
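The state management, routing, and aggregation steps described above can be sketched as a small state machine. This is a minimal illustration under stated assumptions, not a reference implementation: the workers are plain Python stubs standing in for LLM-backed agents, and the names `State`, `route`, and `run_supervisor` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class State:
    """Global context owned by the supervisor (hypothetical shape)."""
    goal: str
    plan: Optional[str] = None
    code: Optional[str] = None
    review: Optional[str] = None
    history: List[str] = field(default_factory=list)


# Stub workers: each one receives only the slice of state it needs.
def planner(goal: str) -> str:
    return f"1. fetch 2. parse 3. print ({goal})"


def coder(plan: str) -> str:
    return f"# code implementing: {plan}"


def reviewer(code: str) -> str:
    return "approved" if code.startswith("# code") else "rejected"


def route(state: State) -> Optional[str]:
    """Routing: choose the next worker from the current state, or None when done."""
    if state.plan is None:
        return "planner"
    if state.code is None:
        return "coder"
    if state.review is None:
        return "reviewer"
    return None


def run_supervisor(goal: str, max_steps: int = 10) -> State:
    state = State(goal=goal)
    for _ in range(max_steps):  # hard cap so the loop always terminates
        nxt = route(state)
        if nxt is None:
            break
        # Aggregation: call the worker with scoped input, fold the result into state.
        if nxt == "planner":
            state.plan = planner(state.goal)
        elif nxt == "coder":
            state.code = coder(state.plan)
        else:
            state.review = reviewer(state.code)
            if state.review == "rejected":
                # Clear both fields so the next route() call goes back to the coder.
                state.code = None
                state.review = None
        state.history.append(nxt)
    return state
```

Keeping `route` as ordinary code rather than an LLM call is one possible design choice; it makes the transitions easy to test, at the cost of the dynamic replanning an LLM-driven supervisor would offer.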

Use Case: Software Development Lifecycle

A classic interview example is a "Feature Development Team."

- Supervisor (Tech Lead): Receives the request: "Build a Python script to scrape stock prices."

- Worker A (Planner): Breaks the request into steps and requirements.

- Worker B (Coder): Writes the actual Python code based on the Planner's specs.

- Worker C (Reviewer): Executes the code and checks for errors.

In this flow, the Coder never talks directly to the Reviewer. The Supervisor takes the Coder's output, passes it to the Reviewer, and if the Reviewer finds a bug, the Supervisor routes the error logs back to the Coder. Galileo AI describes this as a system where "decisions cascade down the hierarchy while information bubbles up."

Common Pitfalls

While robust, this pattern introduces specific failure modes that candidates should mention:

- The Bottleneck: The Supervisor is a single point of failure. If the Supervisor hallucinates the state or fails to parse a worker's output (e.g., the worker returns natural language instead of the expected JSON), the entire chain breaks.

- Context Overflow: Since the Supervisor sees everything, long-running tasks can exceed the Supervisor's context window, leading to "forgetfulness" regarding the original constraints.

- Latency: Every interaction requires a round-trip through the Supervisor, which acts as a middleman, potentially increasing latency compared to direct agent-to-agent handoffs.

Pattern 2: Sequential Handoffs (The Assembly Line)

While the Supervisor pattern centralizes control, the Sequential Handoff pattern (often referred to as a "Chain" or "Pipeline") adopts a linear, decentralized topology. In this architecture, there is no single "brain" managing the state. Instead, the logic is hard-coded into the sequence itself: Agent A completes a task and passes its entire context and output directly to Agent B, acting as the trigger for the next step.

The Workflow: Deterministic Pipelines

This pattern mimics a traditional manufacturing assembly line or a Unix pipe (|). It is best suited for predictable workflows where the steps are known in advance and rarely deviate.

- Upstream Action: The first agent receives the user prompt, executes its specific function (e.g., data retrieval), and appends its result to the conversation history.

- State Transfer: The modified state is passed to the next agent in the chain.

- Downstream Execution: The subsequent agent treats the previous agent's output as its input instructions, performs its task, and passes it forward.
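The three steps above amount to function composition. The following is a minimal sketch in which each "agent" is a stub function; in a real system each stage would wrap an LLM call. The stage names and `run_pipeline` are hypothetical.

```python
from functools import reduce
from typing import Callable, List

Stage = Callable[[str], str]


def research(keyword: str) -> str:
    return f"facts about {keyword}"


def draft(summary: str) -> str:
    return f"Draft based on: {summary}"


def seo(text: str) -> str:
    return text + " [keywords added]"


def run_pipeline(stages: List[Stage], user_input: str) -> str:
    """Feed each stage's output into the next; the list order is the control flow."""
    return reduce(lambda data, stage: stage(data), stages, user_input)


result = run_pipeline([research, draft, seo], "ReAct")
```

Note that nothing here inspects intermediate results, which is exactly why an upstream error flows through unchallenged.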

Use Case: The Content Publishing Pipeline

A classic interview example for this pattern is an automated content generation system. Unlike a general-purpose assistant, this system has a fixed definition of "Done."

- Step 1: Research Agent. Scrapes the top 5 search results for a keyword and summarizes key technical facts.

- Step 2: Drafting Agent. Receives the summary (not the raw HTML) and generates a 1,000-word draft following a specific tone guide.

- Step 3: SEO Agent. Scans the draft, inserts semantic keywords, and optimizes headers.

- Step 4: Formatting Agent. Converts the text into valid Markdown or HTML with syntax highlighting for code blocks.

In this scenario, the SEO Agent does not need to know about the Research Agent; it only cares that it receives text from the Drafting Agent.

Limitations and Engineering Trade-offs

While this pattern is easier to implement due to the lack of complex routing logic, it introduces significant brittleness, a point emphasized in discussions on orchestration vs. choreography.

- Propagation of Error: If the Drafting Agent hallucinates or produces a blank output, the SEO Agent will dutifully try to "optimize" the garbage input. There is typically no mechanism for the chain to "step back" and request a rewrite.

- Lack of Error Recovery: Unlike hierarchical models where a Supervisor can catch a failure and re-assign the task, a sequential chain typically fails completely if one link breaks. As noted in distributed system design, debugging can be harder because the flow logic is implicit in the connections rather than explicit in a controller.

- Context Window Inflation: As the state passes from Agent A to Z, the context window accumulates all intermediate artifacts. Without careful "context pruning" (passing only the summary rather than the full chat history), the final agents may suffer from latency issues or "lost in the middle" retrieval failures.
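One way to picture the context pruning mentioned above is a helper that forwards only the original request plus the most recent messages. This is a rough sketch: `prune_context` and its parameters are hypothetical, and plain truncation is used as a crude stand-in for the LLM-generated summary a production system would more likely pass along.

```python
from typing import List


def prune_context(history: List[str], keep_last: int = 2, max_chars: int = 200) -> List[str]:
    """Keep the first message (the original request) and the last few messages."""
    if not history:
        return []
    head = history[:1]  # original user request and constraints
    tail = history[1:][-keep_last:] if keep_last > 0 else []
    # Truncate each message so one oversized artifact cannot dominate the window.
    return [msg[:max_chars] for msg in head + tail]
```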

Interview Verdict: Use Sequential Handoffs for high-volume, low-variance tasks (like ETL jobs or document processing) where the cost of occasional failure is lower than the cost of building a complex orchestrator.

Pattern 3: Joint Collaboration (Debate & Consensus)

While Hierarchical Orchestration focuses on management and Sequential Handoffs focus on efficiency, Joint Collaboration (often referred to as Multi-Agent Debate) prioritizes accuracy and reasoning quality. In this decentralized topology, multiple agents act as peers, critiquing and refining a single output through multi-turn dialogue before reaching a final decision.

The Core Mechanism: Adversarial & Cooperative Loops

Unlike the linear "fire-and-forget" of an assembly line, this pattern relies on a cyclical workflow. Agents are assigned distinct personas—such as "Proposer," "Reviewer," or "Devil's Advocate"—to introduce diversity in reasoning. The system does not accept the first answer; instead, it forces agents to defend or revise their outputs based on peer feedback.

This approach mirrors decentralized choreography in distributed systems, where there is no single "brain" dictating every step, but rather a consensus emerges from the interactions of autonomous components.

Pseudo-code for a Debate Loop:

    # Agent, check_consensus, extract_final_answer, and summarize_conflict
    # are placeholders for your own implementations.
    def debate_loop(question, max_turns=3):
        # Initialize agents with distinct prompts
        agent_a = Agent(role="Optimist", goal="Find supporting evidence")
        agent_b = Agent(role="Pessimist", goal="Find logical fallacies")

        history = [question]

        for _ in range(max_turns):
            # Agent A proposes or refines
            response_a = agent_a.generate(history)
            history.append(f"Agent A: {response_a}")

            # Agent B critiques
            response_b = agent_b.generate(history)
            history.append(f"Agent B: {response_b}")

            # Check for convergence (e.g., if Agent B says "No issues found")
            if check_consensus(response_a, response_b):
                return extract_final_answer(response_a)

        # Fallback if no consensus is reached
        return summarize_conflict(history)

Consensus Protocols

A critical engineering challenge in this pattern is determining when to stop. Without a robust termination condition, agents can hallucinate in an infinite loop of polite agreement or stubborn disagreement. Common protocols include:

- Majority Vote: Useful when 3+ agents are involved. The system queries all agents for a final structured vote (e.g., JSON output) and selects the most common answer.

- Supervisor Tie-Breaker: A dedicated "Judge" agent (often a stronger model like GPT-4o) reviews the debate history solely to declare a winner or merge the best parts of conflicting answers.

- Threshold Termination: The loop ends if the semantic similarity between two consecutive turns exceeds a certain threshold, indicating convergence.
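Two of these protocols are simple enough to sketch with the standard library. The function names are hypothetical, and two simplifications are assumed: `majority_vote` requires a strict majority (returning `None` on a split, which a tie-breaker could then handle), and `has_converged` uses `difflib` character-level similarity as a cheap lexical stand-in for the semantic similarity described above, which would normally be computed from embeddings.

```python
from collections import Counter
from difflib import SequenceMatcher
from typing import List, Optional


def majority_vote(votes: List[str]) -> Optional[str]:
    """Return the answer backed by a strict majority, or None if there is none."""
    if not votes:
        return None
    answer, count = Counter(votes).most_common(1)[0]
    return answer if count > len(votes) / 2 else None


def has_converged(previous: str, current: str, threshold: float = 0.9) -> bool:
    """Threshold termination: stop when consecutive turns are nearly identical."""
    return SequenceMatcher(None, previous, current).ratio() >= threshold
```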

Scenario: High-Stakes Fact-Checking

This pattern is overkill for simple queries (e.g., "What is the weather?") but essential for high-stakes reasoning where hallucination is unacceptable.

- Use Case: Financial Report Analysis.

- Workflow:

  - Agent A (Analyst) extracts key metrics from a PDF.

  - Agent B (Auditor) verifies the extracted numbers against the raw text and flags discrepancies.

  - Agent A must correct the error or provide a citation to defend the original number.

  - The loop continues until Agent B validates the accuracy.

Trade-offs

- Pros: Significantly reduces hallucination rates; produces more nuanced, well-reasoned outputs.

- Cons: High latency and token cost (3x-5x more tokens per query).

- Engineering Note: Requires strict communication protocols (like standardized JSON schemas) to ensure agents can parse each other's critiques without parsing errors derailing the debate.

The "Glue" Logic: Communication & Conflict Resolution

In a production environment, agents do not merely "chat" with each other in natural language. While the internal reasoning of an LLM is textual, the inter-agent communication must be structured, deterministic, and parseable. A common interview trap is describing multi-agent systems as a group of chatbots in a room. In reality, successful implementations rely on rigid JSON contracts and state machines.

Structured Data Exchange (JSON Schemas)

Agents require a standardized protocol to understand intent, payload, and routing. Instead of sending a raw string like "Please fix this bug," a "Manager" agent sends a structured JSON payload to a "Coder" agent. This ensures the receiving agent knows exactly which file to modify and the context of the request.

Modern implementations often align with emerging standards like the Model Context Protocol (MCP) or JSON-RPC patterns. A typical inter-agent message payload looks less like a chat log and more like an API request:

    {
      "sender_id": "architect_agent_01",
      "recipient_role": "backend_developer",
      "message_type": "task_assignment",
      "priority": "high",
      "payload": {
        "task_id": "T-1024",
        "context": "User cannot login via OAuth.",
        "acceptance_criteria": [
          "Fix 401 error in authcontroller.py",
          "Add unit test for token expiration"
        ]
      },
      "metadata": {
        "max_retries": 3,
        "session_id": "sess_89a7"
      }
    }

By using JSON schemas, you decouple the LLM's reasoning from the execution environment. The sending agent generates the JSON, a runtime layer validates it against a schema (e.g., Pydantic in Python), and only valid requests are forwarded to the receiving agent.
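The validation step in that runtime layer might look like the sketch below. To stay dependency-free it uses only the standard library as a stand-in for a schema library such as Pydantic. The snake_case field names in `REQUIRED_FIELDS`, the `InvalidMessage` exception, and `validate_message` are all assumptions made for illustration.

```python
import json
from typing import Any, Dict

# Assumed minimal contract: field name -> required Python type.
REQUIRED_FIELDS = {
    "sender_id": str,
    "recipient_role": str,
    "message_type": str,
    "payload": dict,
}


class InvalidMessage(ValueError):
    """Raised when an agent's output does not satisfy the message contract."""


def validate_message(raw: str) -> Dict[str, Any]:
    """Parse and validate a raw agent message before it is forwarded."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidMessage(f"not valid JSON: {exc}") from exc
    if not isinstance(msg, dict):
        raise InvalidMessage("top-level value must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(msg.get(name), expected):
            raise InvalidMessage(f"field '{name}' is missing or not {expected.__name__}")
    return msg
```

Rejecting a message here, before the receiving agent ever sees it, turns a natural-language reply from a misbehaving sender into a deterministic error that the glue code can retry or escalate.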

Orchestration vs. Collaboration (Choreography)

A critical architectural distinction—often tested in system design interviews—is the difference between Orchestration and Collaboration (often called Choreography).

- Orchestration (Explicit/Scripted): A central "Controller" or "Router" agent dictates the flow. It explicitly calls Agent A, waits for the result, and then calls Agent B. This is similar to a rigid workflow engine. It is easier to debug but harder to scale if the logic becomes too complex.

- Collaboration (Emergent/Prompt-Driven): There is no central controller. Agents broadcast messages or address each other directly based on their system prompts. For example, a "Researcher" agent might autonomously decide to query a "Writer" agent once it has gathered enough data. This pattern is more flexible but prone to loops and unpredictability.

As noted in discussions on Orchestration vs. Choreography, orchestration represents control from a single perspective, whereas collaboration implies that agents know how to interact with peers without a central authority.

Conflict Resolution & Deadlocks

What happens when a "Reviewer" agent rejects a "Coder" agent's pull request 5 times in a row? In a purely autonomous loop, this results in an infinite cost spiral where agents politely argue forever.

To prevent this, you must implement deterministic conflict resolution logic in the "glue" code outside the LLM:

- Max Turns / Time-to-Live (TTL): Every conversation thread must have a hard limit (e.g., `max_turns=10`). If the goal isn't achieved by then, the loop terminates with an error.

- Escalation Hierarchy: If a "Reviewer" rejects a task twice (`rejection_count >= 2`), the system should automatically route the conversation to a "Supervisor" agent or trigger a Human-in-the-Loop (HITL) event.

- Consensus Mechanisms: For critical decisions, you might use a voting pattern where three agents propose solutions, and a separate logic layer selects the most common answer or the one with the highest confidence score.
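The first two rules can live in a few lines of ordinary code wrapped around the agents. The sketch below assumes the coder and reviewer are passed in as callables; `review_loop`, its parameters, and the status strings are hypothetical names.

```python
from typing import Callable, Tuple


def review_loop(
    write: Callable[[str], str],
    review: Callable[[str], Tuple[bool, str]],
    task: str,
    max_turns: int = 10,
    max_rejections: int = 2,
) -> Tuple[str, str]:
    """Run a coder/reviewer loop with deterministic exits.

    Returns (status, last_draft); status is "approved", "escalated", or "timeout".
    """
    feedback = ""
    draft = ""
    rejection_count = 0
    for _ in range(max_turns):  # Max Turns / TTL
        draft = write(task + feedback)
        approved, comment = review(draft)
        if approved:
            return "approved", draft
        rejection_count += 1
        if rejection_count >= max_rejections:  # Escalation Hierarchy
            return "escalated", draft  # hand off to a supervisor or a human
        feedback = f"\nReviewer feedback: {comment}"
    return "timeout", draft
```

Because the counters sit outside the LLM, no amount of persuasive agent output can talk the loop into running longer.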

Key Takeaway for Interviews: When asked how agents collaborate, focus on the protocol (JSON/MCP), the topology (Orchestrator vs. Choreography), and the safety rails (Max Turns/Escalation) that prevent runaway costs.

Engineering Reality: Latency, Costs, and Failure Modes

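On an architecture diagram, Multi-Agent Collaboration looks elegant and powerful; in production, it is often a latency and cost nightmare. Interviewers ask about this area to find out whether a candidate has real deployment experience rather than having only gotten a demo to run.

Compared with a single-agent ReAct loop, a multi-agent system introduces substantial network overhead and context redundancy. Designed poorly, it not only degrades performance but can, in extreme cases, burn through half a month's budget overnight.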

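1. The Multiplier Effect on Cost and Latency

In a single-agent system, latency is determined mainly by the LLM's generation speed (tokens/sec). In a multi-agent system, latency and cost grow multiplicatively.

- Redundant context passing: For a collaborator to understand the task, Agent A often has to package a large amount of historical context and send it to Agent B. According to Datagrid's engineering experience, the biggest cost trap is "chatty agents." For example, an invoice-processing agent finishes its work and then sends detailed, complete logs to three other agents, so token consumption balloons on meaningless handshakes and state synchronization.

- Unpredictable call chains: While optimizing its multi-agent system, HockeyStack found that relying on a general-purpose large model for every step incurs heavy compute overhead. They experienced cases where processing a single lead took more than 30 seconds, and the opaque call chain made debugging extremely difficult. By breaking the generalist agent into stateless, atomic, specialized agents, they ultimately reduced latency by 72% and cost by 54%.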

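2. Fatal Infinite Loops

The most embarrassing failure mode in a multi-agent system is the infinite loop. It usually occurs when two agents wait on each other for confirmation, or when they are excessively polite.

- Politeness Loop:

  - Agent A: "Here is the code."

  - Agent B: "Looks good, thank you!"

  - Agent A: "You're welcome. Let me know if you need anything else."

  - Agent B: "I will. Thanks again."

  - ... (tokens are consumed indefinitely until the context limit is hit)

- Argument Loop: The Reviewer agent requests changes, the Coder agent revises and resubmits, and the Reviewer judges the result substandard and rejects it again. Without an arbitration mechanism or a forced termination condition, this process continues indefinitely.

As engineering discussions on LinkedIn have pointed out, preventing these loops is not only about saving money but also about system reliability. Circuit breakers must be introduced at the architecture level.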

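3. Engineering Circuit Breakers and Defensive Strategies

In an interview, you should show concrete defensive code logic or configuration strategies rather than only discussing concepts:

- Hard turn limits (Max Turns / Timeouts): Never trust an LLM to stop on its own. Set `MAX_ITERATIONS` (for example, 10) at the orchestrator layer. Once the limit is reached, terminate forcibly and either raise an exception or return the best result so far. Glean's technical documentation recommends that, in addition to turn limits, real-time systems set strict timeouts so that one stalled agent cannot hang the whole system.

- State Machine Guardrails: Use a finite state machine (FSM) to enforce the allowed transitions. For example, after `Reviewer` rejects three times in a row, the state must transition to `Human_Escalation` (human intervention) or `Fail_Safe` rather than calling back into `Coder`.

- Duplicate output detection: Before writing to the context, compute the semantic similarity or hash of the current output against historical messages. If an agent is found to be repeating the same phrasing, trip the circuit breaker immediately.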
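A turn budget and hash-based duplicate detection can be combined in one small guard object. This is a sketch under stated assumptions: `LoopBreaker` and its method names are hypothetical, and exact hashing after whitespace and case normalization only catches near-verbatim repeats. Catching paraphrased repetition would need the semantic-similarity variant, typically built on embeddings.

```python
import hashlib
from typing import Set


class LoopBreaker:
    """Trips when an agent repeats itself or the turn budget runs out."""

    def __init__(self, max_iterations: int = 10) -> None:
        self.max_iterations = max_iterations
        self.turns = 0
        self._seen: Set[str] = set()

    @staticmethod
    def _fingerprint(text: str) -> str:
        # Normalize case and whitespace so trivial variations hash the same.
        normalized = " ".join(text.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def should_stop(self, output: str) -> bool:
        """Record one agent output; return True if the loop must be cut."""
        self.turns += 1
        digest = self._fingerprint(output)
        repeated = digest in self._seen
        self._seen.add(digest)
        return repeated or self.turns >= self.max_iterations
```

The orchestrator would call `should_stop` on every message before appending it to the shared context, and terminate or escalate as soon as it returns `True`.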

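Decision Matrix: When to Abandon Multi-Agent?

Multi-agent is not a silver bullet. At the end of the interview, use a decision matrix to demonstrate your technical judgment by explaining when to fall back to a simple Prompt Chain (single agent).

| Evaluation Dimension | Recommended: Prompt Chain (Single Agent) | Recommended: Multi-Agent Collaboration |
|---|---|---|
| Task complexity | Linear, fixed steps (e.g., data extraction -> translation -> storage). | Non-linear, requiring divergent thinking or multi-perspective debate (e.g., software development, legal drafting). |
| Latency tolerance | Low-latency requirement (< 3 seconds). A single call completes the task. | High latency tolerance (> 10 seconds). Asynchronous processing and multiple inference round-trips are acceptable. |
| Cost sensitivity | Limited budget; token usage must be tightly controlled. | Ample budget; willing to trade tokens for higher-quality reasoning (accuracy). |
| Context dependency | Simple task context; no need to switch perspectives frequently. | The task needs expertise from different domains (e.g., a Python expert and a SQL expert working together). |
| Failure risk | Simple logic; easy to debug and reproduce. | Interactions are emergent; specific failure paths are hard to reproduce, and operational cost is high. |

Summary: In a 2026 interview, clearly explaining "how to build an agent" earns only a passing grade. Using the matrix above to explain "when not to use agents," and detailing the circuit-breaker strategies used in production, is what demonstrates the value of a senior engineer.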